Hyperthermia by Design

AI companies are erecting massive factories across the US. In their race for scale, they are converting gigawatts of electricity into heat, which is often released into air that may already be hotter than average. While these facilities consume enormous amounts of energy to output often half-baked mimicry of thought and expression, in physical terms, their output is almost exclusively heat. This is the reality of generative AI data centers. Half-baked ideas aren’t unusual. In fact, they’ve been common for as long as there have been people. But that activity had not previously been an intrinsic threat to our biome. The leading thinkers in AI believe that it calls for improvement (aka outdoing the competition) through sheer scaling. This means training new models on ever larger datasets purporting to contain ever-growing amounts of human knowledge, and letting those results inform how large the next datasets must be. This effort started in valid science, but carrying it forward while disregarding the literal exponential growth of resources consumed, resources which are finite in their supply, mistakes hubris for curiosity.

I must clarify that AI is decades old and in widespread use with clear benefits. Innovations in neural networks in the late 1970s saw steady, brisk progress in their sophistication. This led to famous events like Deep Blue’s chess victory in 1997, autonomous navigation on NASA’s Spirit and Opportunity Mars rovers, and Apple’s release of Siri in 2011. In the 2010s, medical science started using AI extensively to analyze imaging scans, identifying otherwise unobservable features for early diagnoses of cancer and other disorders. What is concerning is that modern generative AI is bringing severely dystopian vibes. Large Language Models (LLMs) crank out libraries of uncanny resemblances to text sprinkled with deeply concealed nonsense and factual errors, while image generators churn through vital chores such as inserting Where’s Waldo into live-action Hieronymus Bosch triptychs. Generative AI consumes prodigious resources since it’s tasked with creating large amounts of information, as opposed to other AIs that effectively make discrete decisions with a more limited amount of information. Training the new models calls for datasets in the trillions of words, and it is during training that data centers will typically use the most resources. Even so, this AI could be a beneficial tool if its environmental impact were abated with renewables and appropriate siting. Doctors and licensed clinicians spend up to half their working hours writing medical records, a burden that even current technology could alleviate with a HIPAA-safe bot listening in during patient visits. Likewise, overworked public defenders could have a bot distill essential info about their clients in time to secure fairer outcomes. Instead, the big AI money is in replacing such highly skilled (and paid) professionals with bots driven by ambivalent prompt engineers, preferably phoned in from a country with cheaper labor.
The surveillance industrial complex is also surfing AI’s rising tide to devise lucrative, quasi-legal ways to deprive us of insurance coverage, affordable rents, and even our freedom.

Scientists predict the electricity used by our gamble on generative AI will make it the fifth-largest global power consumer in 2026. At 1050 terawatt-hours, this places AI’s resource demand somewhere between that of Japan and Russia. Typical construction means almost all of this energy is converted by the silicon chips, power conversion equipment, and cooling systems into heat. This heat can’t accumulate inside, and capturing or at least storing heat for delayed release is a rare voluntary industry practice due to the high cost. So, most of the energy entering a facility as electricity must leave in the form of waste heat, whether vented to the outside air or piped offsite for district heating. Releasing the heat into the air is by far the most common cooling method, being the cheapest. It comes in two types: open-loop, where up to millions of gallons of water are evaporated daily, and closed-loop, where air is vigorously blown over coils carrying coolant recirculated back to the electronics. Open-loop cooling needs less power and is therefore more cost-efficient, but this comes at the expense of literal rivers of drinking water.

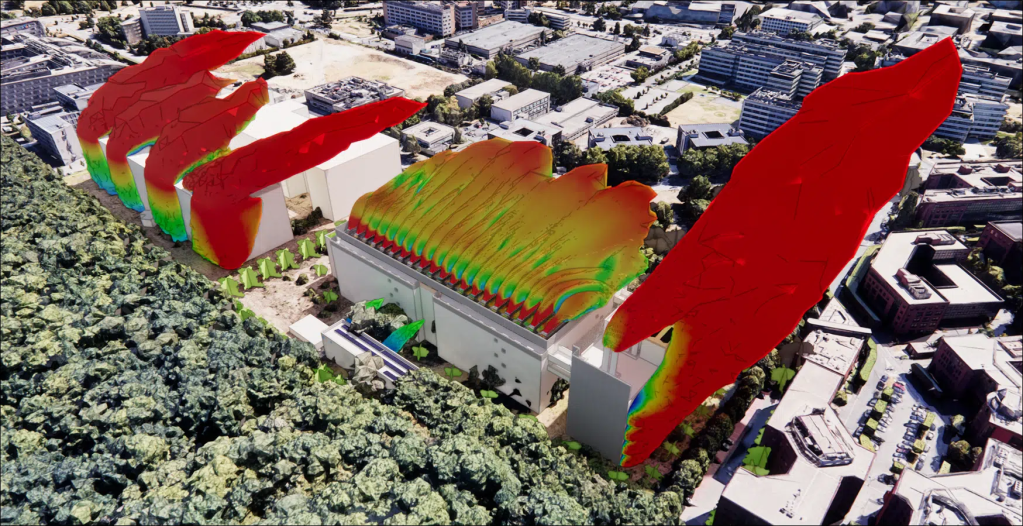

The hot air exhaust from AI data centers rises as plumes and contributes to the creation of heat islands, similar to other industrial facilities. Besides exposing nearby people to higher temperatures, heat islands are direct contributors to climate change, and they can cause urban air pollution to accumulate dangerously by helping thermal inversions form. Modeling the severity, size, and dispersion of a particular site’s heat island is very complex, given the effects of weather and building geometry. But overall, the excess heat felt by someone near the site will be related to the density of heat released per square meter over the site’s footprint, although this release will also fluctuate based on the site’s recent power usage. This extra heat atop natural temperatures will be at its worst on hot summer evenings and nights with stagnant air. A study by the French engineering company EOLIOS describes elaborate methods for easing a data center’s extreme heat effects, yet these methods don’t alter the fact that all heat created inside the center will eventually have to be pushed outside during warm months. Heat simply doesn’t disappear. American data centers, as a rule, have only the absolute minimum legal heat mitigations. Sometimes slightly less.

Current-generation hyperscale AI data centers can devote roughly 80% of their total power to the electronics, the “IT load,” with Google facilities reporting over 90%. This is notable because such usage isn’t affected by improvements in cooling efficiency. It’s important to clarify that a data center is not a literal 1200-watt incandescent light bulb. Its power draw will fluctuate following the demands of its load, including down to near zero, similar to how a laptop fan speeds up and down during gaming. Unlike mere mortal gamers, hyperscale data centers will intentionally schedule their jobs to maintain full hardware commitment whenever possible, and thus the highest feasible power consumption. The perverse economics of AI, with projected 1-to-3-year GPU lifespans, mean such facilities may lose more money each year through depreciation of their silicon than they pay for utilities. This makes every minute spent below peak load a minute of lost potential revenue.

These circumstances commit hyperscale data centers to converting all available electricity into waste heat that will be released into the air, with expediency usually meaning substantial fossil fuels were burned too. Utility companies are fully onboard, eager to overbuild to the misery of all but their shareholders. Financial sustainability of generative AI in its current form assumes exponential adoption, like when the Internet was introduced in the 1990s. This assumption simply does not comport with the observable usefulness of AI right now, nor with what can be reasonably extrapolated into the immediate future, if ever. Vendors systematically overstate their products’ capabilities, often forcing unwitting customers to hire (or re-hire) human staff to correct errors made by their new AI tools. Substantial improvement in AI quality will certainly occur, and it is quite plausible such tools will eventually enjoy ubiquitous adoption.

The current hope for an inflection point at which AI exceeds human capabilities, aka the singularity (several such hopes have come and gone), is to have the AI models themselves train their successors for even grander cash prizes. However, these promises of prosperity and long-term tax revenue for gullible municipalities still require exponential adoption. Anything less would prevent these vendors from paying off the massive debts they’re accumulating during the digital infrastructure buildout. That is, anything less means bankruptcy for all but NVIDIA, Google, and Microsoft.

| Project Name | Location | Power Rating (MW) | Acres | Heat Density (W/m^2) |
| --- | --- | --- | --- | --- |
| Armory Innovation District | St. Louis | 120 | 11 | 2696 |
| Midday sun | | | | 1000 |
| Project Cumulus (canceled) | St. Charles | 1200 | 440 | 674 |
| Beltline Energy | Pacific, MO | 1200 | 500 | 593 |
| Nebius Independence Campus | Jackson, MO | 800 | 398 | 497 |
| Cloverleaf Infrastructure | Troy, IL | 500 | 300 | 412 |
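
For readers who want to check the heat density column, here is a minimal Python sketch of the arithmetic. It rests on two simplifying assumptions that are mine for illustration: the full rated power ends up as heat, and that heat is spread evenly over the whole site footprint. The function name `heat_density_w_per_m2` and the `SITES` dictionary are hypothetical helpers. The power and acreage figures are copied from the table.

```python
# Square meters in one international acre.
ACRE_M2 = 4046.8564224


def heat_density_w_per_m2(power_mw: float, acres: float) -> float:
    """Rated power divided by site area, in watts per square meter.

    Assumes (for illustration only) that all rated power becomes heat
    and that it is released evenly across the footprint.
    """
    return power_mw * 1e6 / (acres * ACRE_M2)


# (rated power in MW, footprint in acres), taken from the table.
SITES = {
    "Armory Innovation District": (120, 11),
    "Project Cumulus (canceled)": (1200, 440),
    "Beltline Energy": (1200, 500),
    "Nebius Independence Campus": (800, 398),
    "Cloverleaf Infrastructure": (500, 300),
}

for name, (power_mw, acres) in SITES.items():
    density = heat_density_w_per_m2(power_mw, acres)
    print(f"{name}: {density:.0f} W/m^2")
```

A real site will run below its rated capacity much of the time, so these numbers are best read as upper bounds rather than typical values.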

The proverbial brass ring above this madness is Artificial General Intelligence (AGI) possessing unambiguously superhuman competency with anything thrown at it. AGI is understandably expected to be a money-printing machine, to whatever extent money still makes sense at that point. But this prompts the question whether the benchmark truly is AGI, or rather, whether society’s biggest economic players are deciding they want mass layoffs regardless. Are they really so certain they would be personally insulated from the boiling climate upheaval heralded by their new army of chronically confused bots?

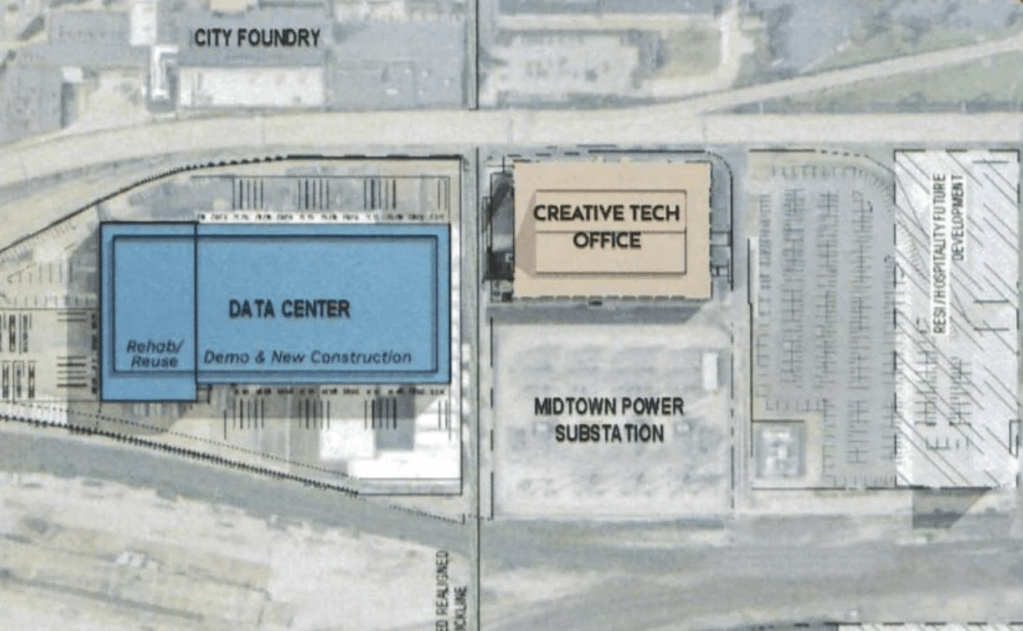

So what does all this mean for St. Louis? The table above lists some large projects at varying stages of proposal or planning in the Bi-State area. Most are in rural or suburban areas, each occupying hundreds of acres and rated for up to a gigawatt or more. Ameren UE has taken its cue and just secured approval for an 800-megawatt natural gas power plant for data centers, which we will have to help pay for, thanks to the recently passed SB4. Responsive regulations (when present) appear to touch only on building height, noise, and light pollution. There are no limits on heat release or any mandated heat island abatements.

That table entry for the Armory Innovation District in St. Louis should stick out. Its tiny 11-acre footprint means its cooling plant would expel heat at a density several times higher than all other data centers shown, promising a compact yet mighty heat island. I must again clarify that the power figures in the table are the centers’ built capacity, so the true power usage will be lower and will fluctuate rather quickly. Still, St. Louis presently has no code to mitigate the worst heat-related excesses of these AI data centers. In addition, the Armory proposal specifically seems to be exploiting its location in a special SLU 353 redevelopment zone to claim exemption from future regulations. That is, placement in this particular area comes with an exemption from mandated renewable energy and environmental studies for dangerous heat effects. Regarding the latter, if the proposed Armory site sustained release of only 50% of its capacity as heat, with its cooling plant running at full tilt during a heat wave, its heat density would be 135% of maximum solar irradiance. That is 135% of the amount by which the midday sun heats the ground. Researchers have observed solar farms causing heat islands of up to 7°F, even though such farms inherently release only a fraction of this irradiance as heat. So the Armory site could multiply that effect, with inadequate dispersion bringing infernal conditions to immediate neighbors like the Foundry, its own office tenants, and even drivers stranded in traffic jams on the elevated interstate. Anyone living nearby should be concerned about the impact on their quality of life.
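
The 135% figure can be checked with a few lines of Python. This is a rough sketch under stated assumptions, not a heat island model: it takes 1000 W/m^2 as the midday sun reference (the value used in the table), spreads the heat evenly over the 11-acre footprint, and ignores weather and dispersion entirely. The function name `fraction_of_midday_sun` and its `utilization` parameter are hypothetical names chosen for illustration.

```python
ACRE_M2 = 4046.8564224          # square meters in one acre
PEAK_SOLAR_W_PER_M2 = 1000.0    # "midday sun" reference from the table


def fraction_of_midday_sun(power_mw: float, acres: float,
                           utilization: float) -> float:
    """Heat released per square meter, as a fraction of peak sunlight.

    utilization is the share of rated capacity actually being drawn
    (0.5 means the site runs at half of its built capacity).
    """
    heat_w = power_mw * 1e6 * utilization
    density = heat_w / (acres * ACRE_M2)
    return density / PEAK_SOLAR_W_PER_M2


# Armory site: 120 MW rated, 11 acres, running at 50% of capacity.
armory = fraction_of_midday_sun(120, 11, 0.5)
print(f"Armory at half capacity: {armory:.0%} of midday sun")
```

Changing `utilization` shows how quickly the comparison shifts: under the same assumptions, the site matches the midday sun at a little over a third of its rated capacity.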

I hope this article makes clear that the singularity-or-bust economics of AI data centers mean any voluntary commitments by builders to respect their neighbors and the environment cannot be trusted. You are not angry enough with elected officials who are taking these builders at their word.

Ben West is a computer engineer and serial small nonprofit board member whose persnickety attention to trivial details is tolerated most of the time.